GLM-4.6V-Flash

Features

- Free & Open Source — Zero cost with full open-source availability

- 9B Parameters — Lightweight enough for consumer GPUs and edge devices

- Low Latency — Optimized for fast inference and real-time applications

- Multimodal — Vision and language understanding in one compact model
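To put the "lightweight enough for consumer GPUs" claim in concrete terms, here is a back-of-the-envelope sketch of how much memory 9B parameters take at common numeric precisions. It assumes the 9B figure covers the whole model. It counts weights only and ignores activations, KV cache, and runtime overhead. The precision list and the helper `weight_memory_gb` are illustrative choices, not part of any official tooling.

```python
# Rough weight-memory estimate for a 9B-parameter model at common precisions.
# Assumption: 9e9 parameters total; ignores activations, KV cache, and runtime overhead.
PARAMS = 9e9

# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {
    "fp16/bf16": 2.0,
    "int8": 1.0,
    "int4": 0.5,
}


def weight_memory_gb(params: float, bytes_per_param: float) -> float:
    """Return approximate weight storage in decimal gigabytes (1 GB = 1e9 bytes)."""
    return params * bytes_per_param / 1e9


if __name__ == "__main__":
    for name, nbytes in BYTES_PER_PARAM.items():
        print(f"{name:>9}: ~{weight_memory_gb(PARAMS, nbytes):.1f} GB")
```

By this estimate, 16-bit weights alone need about 18 GB. 4-bit quantization cuts that to roughly 4.5 GB, which is where consumer GPUs and edge devices become realistic targets. Real requirements depend on the inference runtime, the quantization scheme, and the context length.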

Use Cases

- Local AI — Run AI on your own hardware without cloud costs

- Mobile/Edge — Deploy on phones, tablets, and edge devices

- Prototyping — Rapid prototyping with zero API costs

FAQ

What is GLM-4.6V-Flash?

GLM-4.6V-Flash is a lightweight, open-source multimodal vision-language model with 9B parameters. It is optimized for local deployment, low-latency inference, and edge or consumer hardware.

How much does GLM-4.6V-Flash cost?

GLM-4.6V-Flash is listed as free and open source. Check the official GLM-4.6V-Flash website for the most up-to-date pricing.

What are the key features of GLM-4.6V-Flash?

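GLM-4.6V-Flash's key features are free and open-source availability, a compact 9B-parameter size suited to consumer GPUs and edge devices, low-latency inference for real-time applications, and multimodal vision and language understanding in one model.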

What can I use GLM-4.6V-Flash for?

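Common use cases for GLM-4.6V-Flash include running AI locally on your own hardware without cloud costs, deploying on phones, tablets, and other edge devices, and rapid prototyping with zero API costs.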

Related Tools

Tools with similar capabilities you might also like

This innovative design allows the model to process an impressive 1 million tokens, setting a new standard for long-context understanding among large-scale found

With features like advanced reasoning, vision analysis, and code generation, it caters to a wide range of professional needs. The advanced reasoning capability

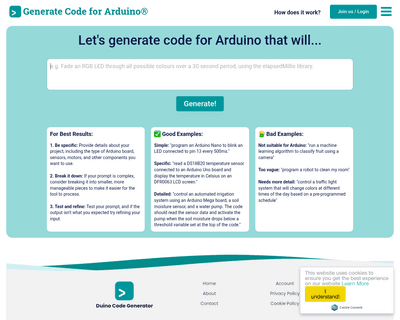

By leveraging advanced AI technology, specifically the GPT-4 model, the platform allows users to input instructions in plain English, which are then converted i

By automating the creation of clinical notes, it allows professionals to focus more on patient care rather than paperwork. The tool employs advanced speech-to-t

By offering embeddable AI widgets that can be integrated seamlessly through HTML code, users can enhance their websites quickly and efficiently. This accessibil

By integrating Backend as a Service (BaaS) with LLMOps, it provides a solid infrastructure that simplifies the complexities of AI application development. The i