GGML

Features

- C Implementation — GGML is written in C, ensuring both efficiency and portability for various applications.

- 16-bit Float Support — The library supports 16-bit floating-point numbers, optimizing performance and memory usage.

- Integer Quantization — GGML facilitates integer quantization (4-bit, 5-bit, 8-bit), which reduces memory usage and speeds up inference.

- Automatic Differentiation — It includes capabilities for automatic differentiation, making gradient-based optimization easier.

- Optimizers — The library comes with built-in optimizers like ADAM and L-BFGS, enhancing model training efficiency.

- Apple Silicon Optimization — GGML is specifically optimized for performance on Apple Silicon, ensuring high efficiency on compatible hardware.
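To make the integer quantization feature above more concrete, here is a minimal sketch of block-wise symmetric 8-bit quantization in plain C. It is an illustration of the general technique only, not GGML's actual storage format or API: the block size of 32 and the names `block_q8`, `quantize_block`, and `dequantize_block` are assumptions chosen for this example.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define BLOCK_SIZE 32 /* assumed block size for this sketch */

/* One quantized block: a shared float scale plus BLOCK_SIZE signed 8-bit values. */
typedef struct {
    float  scale;
    int8_t q[BLOCK_SIZE];
} block_q8;

/* Quantize BLOCK_SIZE floats so that x[i] is approximately scale * q[i]. */
static void quantize_block(const float *x, block_q8 *out) {
    assert(x != NULL && out != NULL);

    /* Find the largest magnitude in the block; it maps to +/-127. */
    float amax = 0.0f;
    for (size_t i = 0; i < BLOCK_SIZE; i++) {
        float a = x[i] < 0.0f ? -x[i] : x[i];
        if (a > amax) amax = a;
    }

    out->scale = amax / 127.0f;
    float inv = out->scale > 0.0f ? 1.0f / out->scale : 0.0f;

    for (size_t i = 0; i < BLOCK_SIZE; i++) {
        float v = x[i] * inv;
        /* Round half away from zero, then clamp to the int8 range used here. */
        int r = (int)(v >= 0.0f ? v + 0.5f : v - 0.5f);
        if (r > 127) r = 127;
        if (r < -127) r = -127;
        out->q[i] = (int8_t)r;
    }
}

/* Recover approximate floats from a quantized block. */
static void dequantize_block(const block_q8 *in, float *y) {
    assert(in != NULL && y != NULL);
    for (size_t i = 0; i < BLOCK_SIZE; i++) {
        y[i] = in->scale * (float)in->q[i];
    }
}
```

Each block stores 32 one-byte values plus one 4-byte scale, instead of 32 four-byte floats. The cost is a rounding error of at most about half the scale per value.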

FAQ

What is GGML?

GGML is a machine learning library written in C. Its design prioritizes efficiency, so large models can be deployed on standard hardware. The C implementation keeps the library portable, while 16-bit floating-point support and integer quantization reduce memory usage and speed up inference.
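The memory savings from lower-precision weights can be estimated with simple arithmetic. The sketch below is a rough illustration, not part of GGML: the helper name `weight_bytes` is hypothetical, and the estimate ignores the per-block metadata (such as scales) that real quantized formats add.

```c
#include <assert.h>
#include <stdint.h>

/* Approximate bytes needed to store n_params weights at bits_per_weight bits each.
   Rounds up to whole bytes; ignores per-block scale metadata. */
static uint64_t weight_bytes(uint64_t n_params, unsigned bits_per_weight) {
    assert(bits_per_weight > 0 && bits_per_weight <= 64);
    return (n_params * bits_per_weight + 7) / 8;
}
```

For a hypothetical model with 7 billion parameters, this gives about 28 GB at 32 bits per weight, 14 GB at 16 bits, and 3.5 GB at 4 bits (decimal gigabytes).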

How much does GGML cost?

Visit the official GGML website for the most up-to-date pricing.

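What are the key features of GGML?

GGML's key features include a C implementation, 16-bit float support, integer quantization (4-bit, 5-bit, 8-bit), automatic differentiation, built-in optimizers such as ADAM and L-BFGS, and optimization for Apple Silicon.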

Related Tools

Tools with similar capabilities you might also like

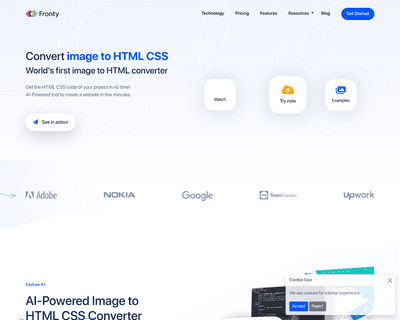

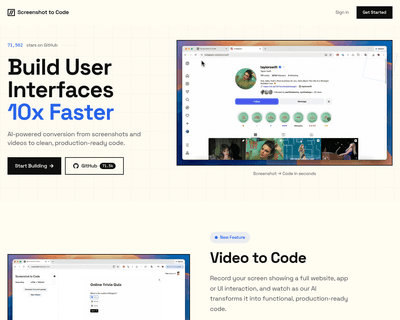

By allowing users to upload screenshots of their designs, it quickly generates the corresponding HTML and CSS code, saving considerable time and effort. The pla

Unlike many existing platforms that struggle with this integration, Ideogram AI delivers visually appealing results that maintain coherence between the textual

With real-time code completion suggestions and enhanced recommendations based on code comments, it helps streamline the coding process while minimizing potentia

By supporting a range of frameworks and libraries, including HTML, Tailwind CSS, React, Vue, and Bootstrap, it caters to a diverse audience of developers and de

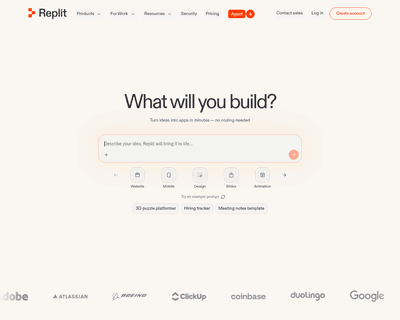

Users can input detailed descriptions of their app concepts and receive a functional prototype in just 30 seconds, a remarkable feat that significantly reduces

Its user-friendly interface simplifies the writing process, while features like dynamic syntax highlighting enhance readability and facilitate quick debugging.